“Niche Museums”: finding small and sometimes weirdly specific museums near you… this is great, although it sadly only lists US-based museums.

The Stochastic Game

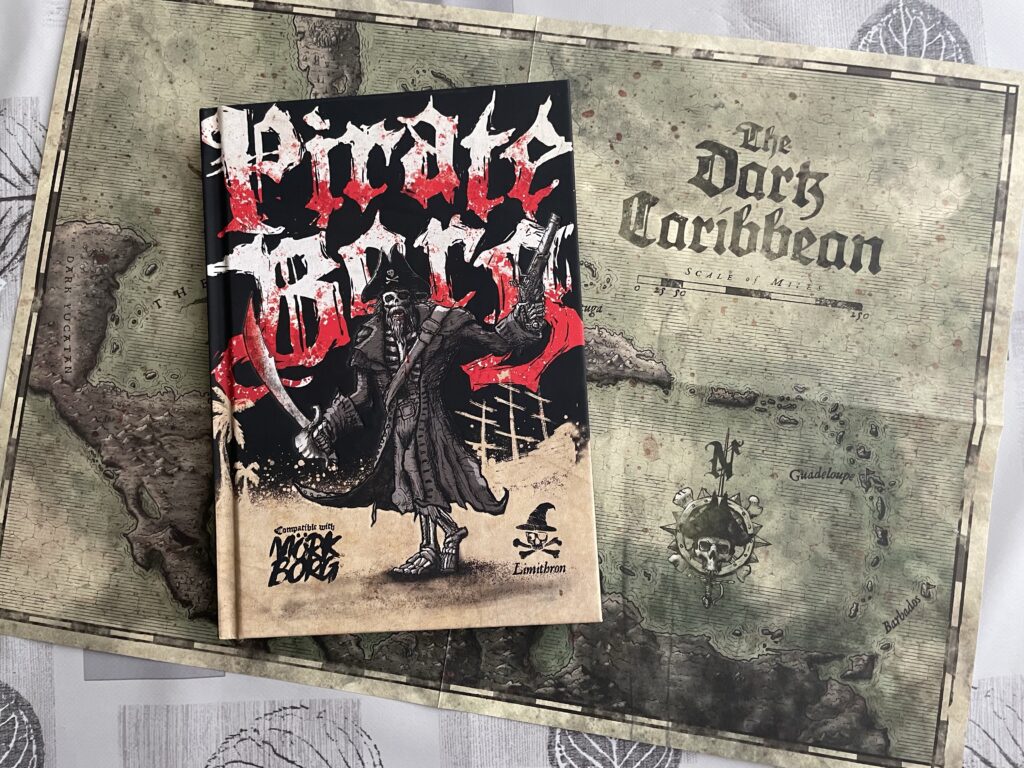

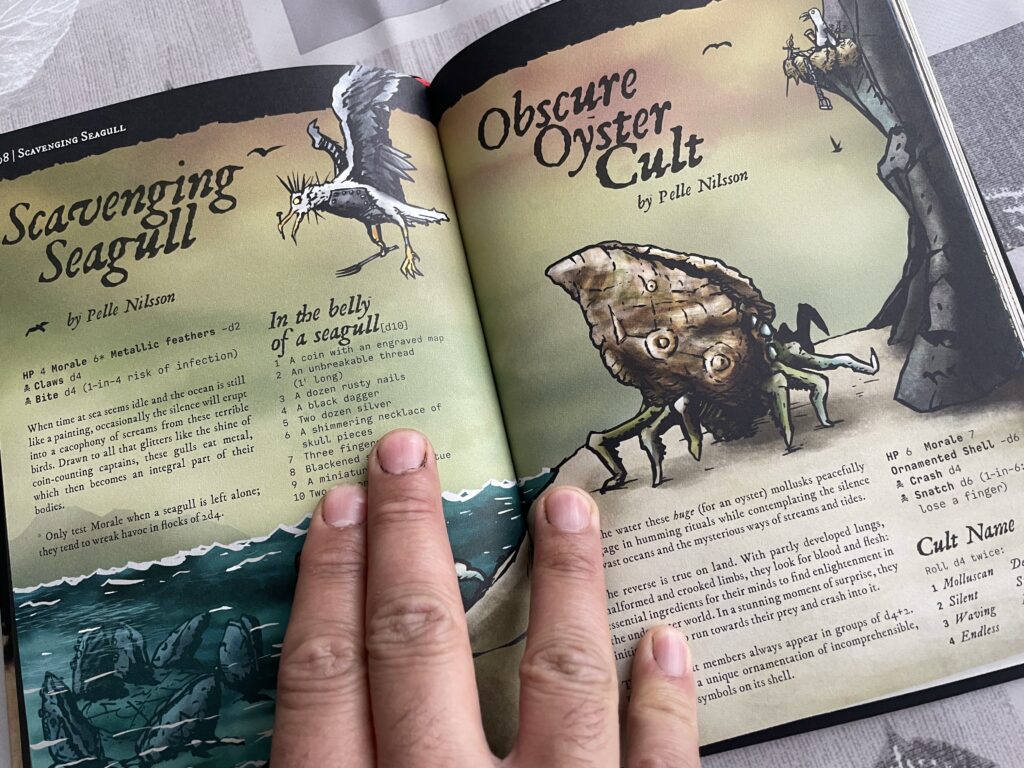

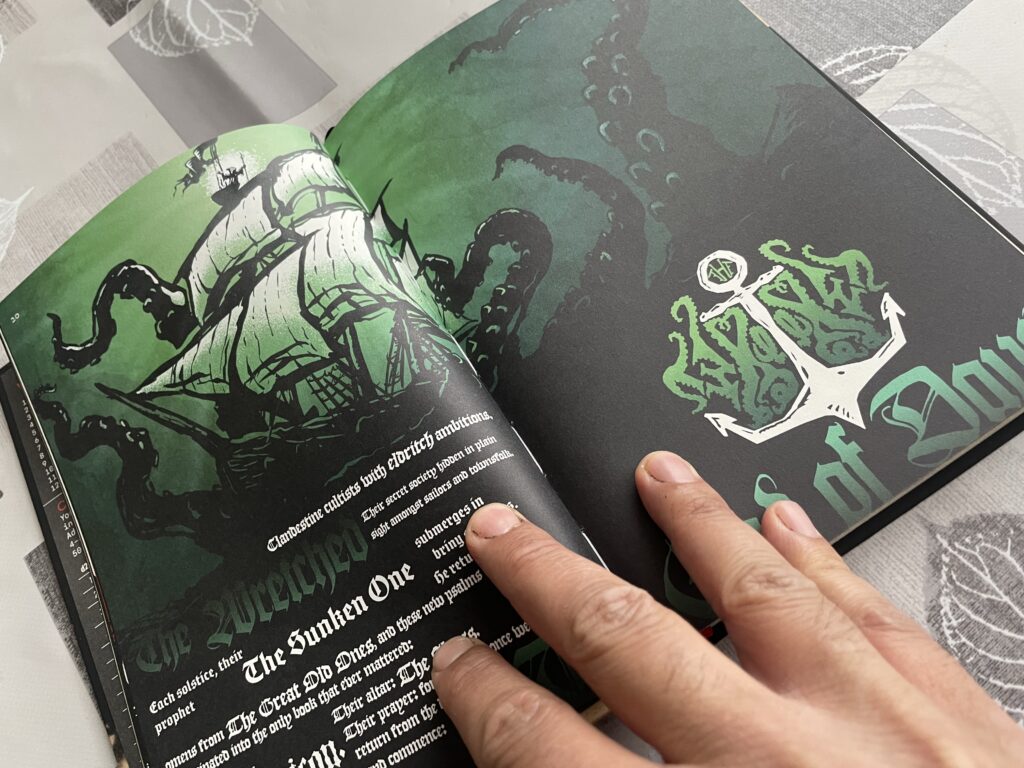

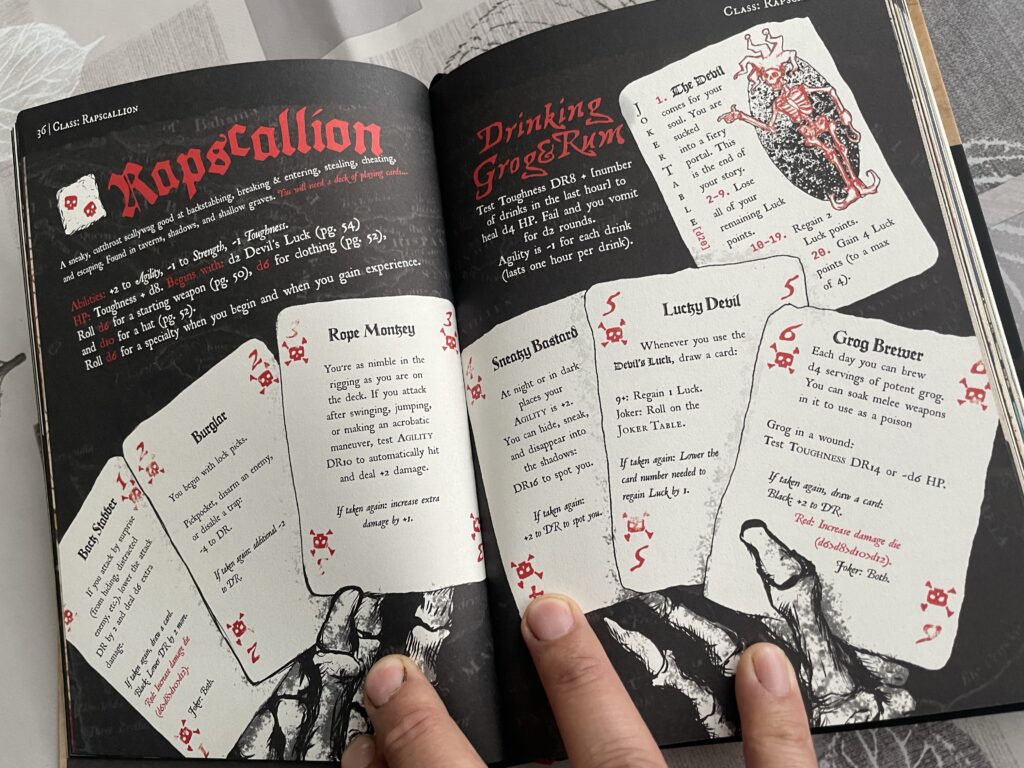

Pirate Borg has dropped anchor! And yes, there are sea shanty mechanics! #ttrpg

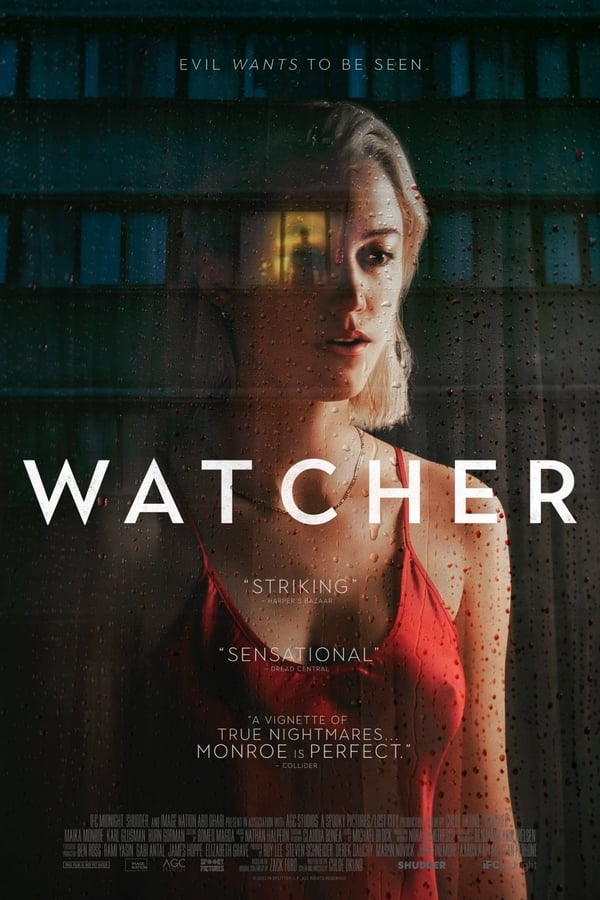

“Watcher” (2022) Another movie with a great atmosphere. Rear Window meets Lost In Translation with a big dose of creepiness and female anxiety. Good acting. Looking forward to more stuff from Chloe Okuno!

Oh hey I hadn’t noticed that Liquid Tension Experiment released a third album! And it has a prog-metal cover of Rhapsody in Blue! 🤩🤯

Writing a RuneQuest scenario about Gloranthan werewolves at night in a park where coyotes live is how you find inspiration, right? #ttrpg

Me refactoring 1500 LOC since yesterday

“Sound of My Voice” (2011): great atmosphere, but the characters don’t do much and IMHO there is little ambiguity about Maggie. On a similar theme (but in a vastly different style), I preferred “Faults” (2014).

French people know what’s up around this time of year… if you’re allergic to almonds, stay away from us this week!

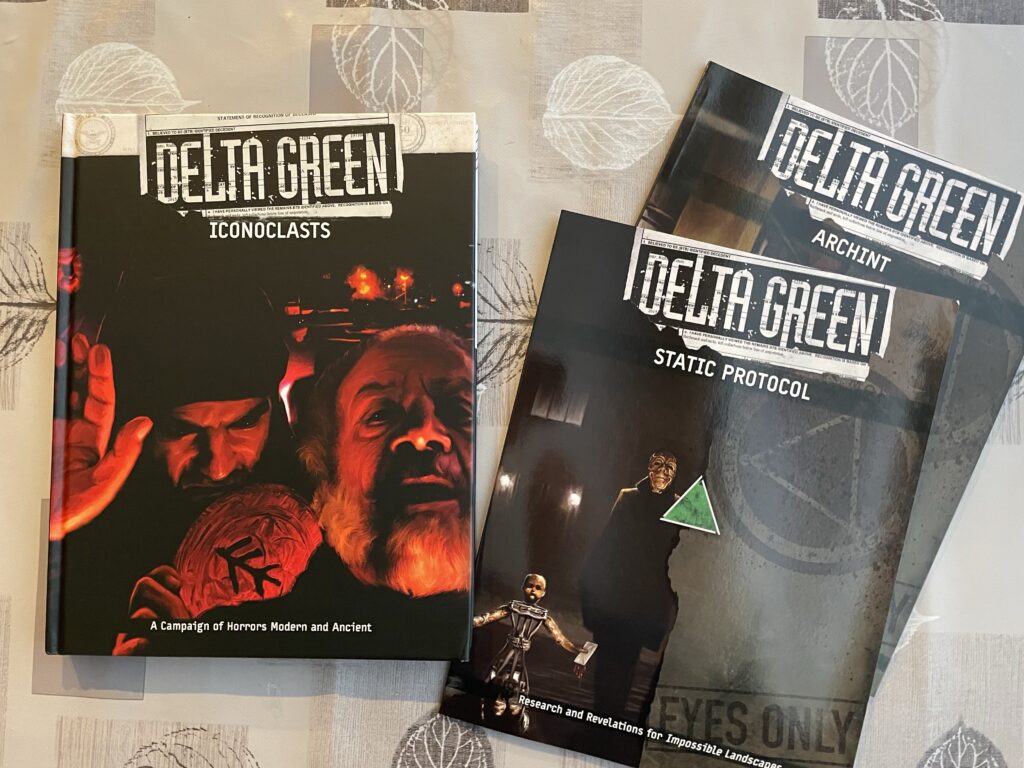

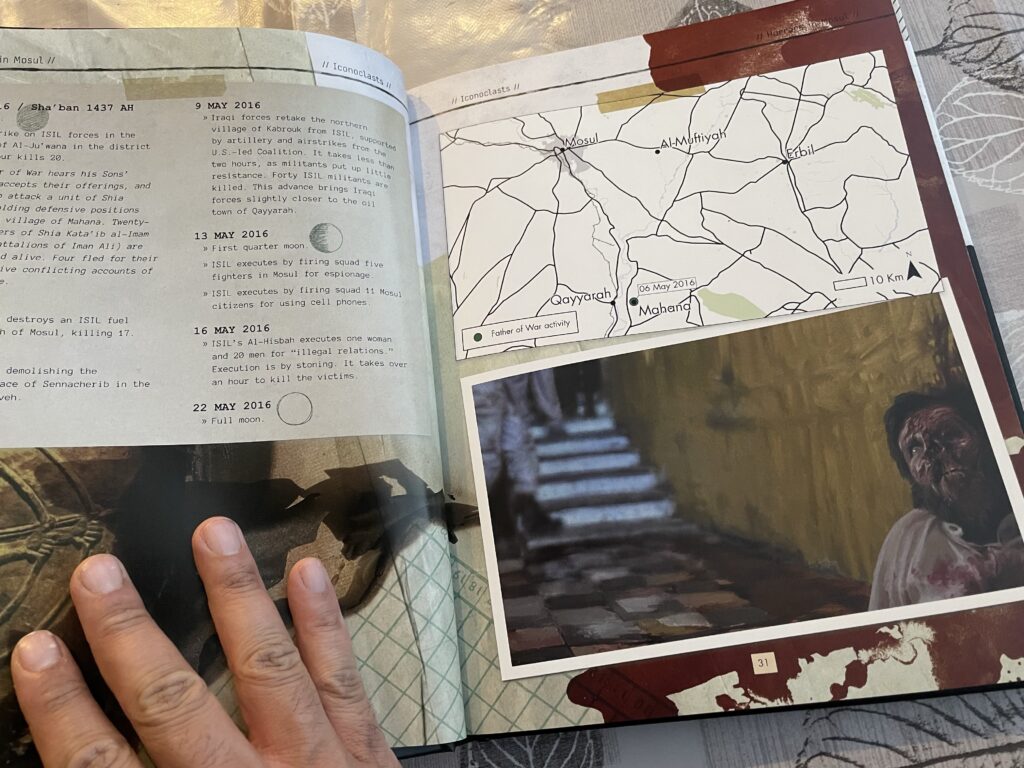

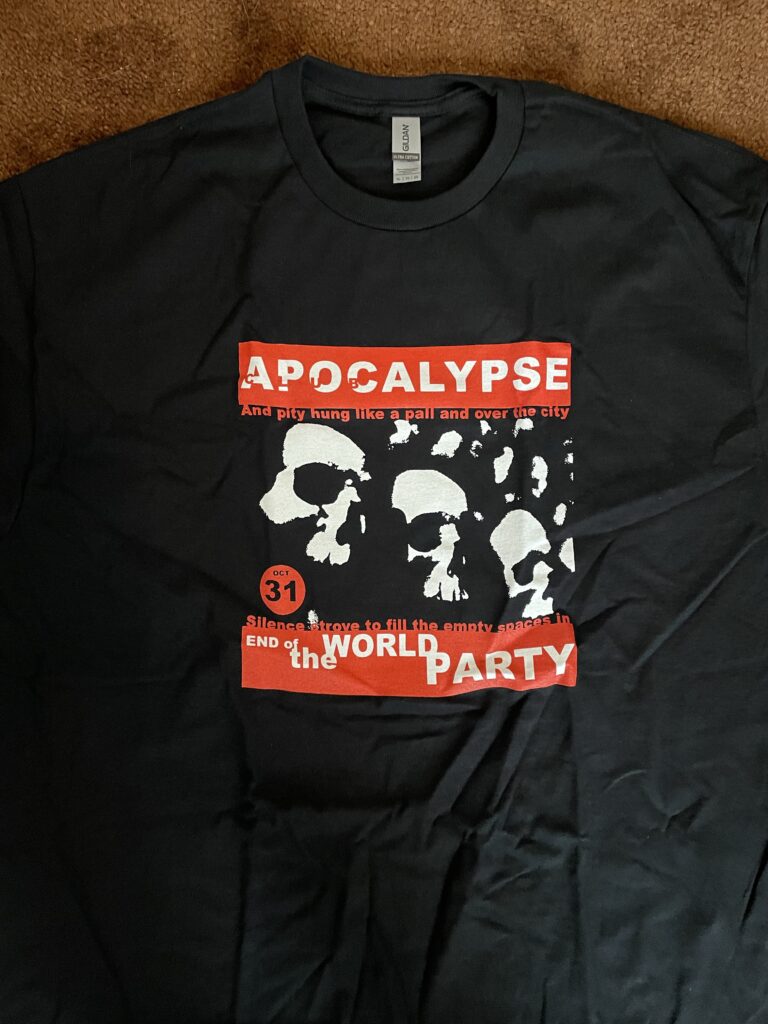

Delta Green dump from the local green box! Dossiers for ongoing operations, undercover attire for New York, and standard Canadian federal apparel!

Ugh this sucks. We added 20 years of copyright protection (it’s now up to the author’s death plus 70 years) to keep in line with American copyright laws, under NAFTA deals. Disney gotta Disney, I guess.