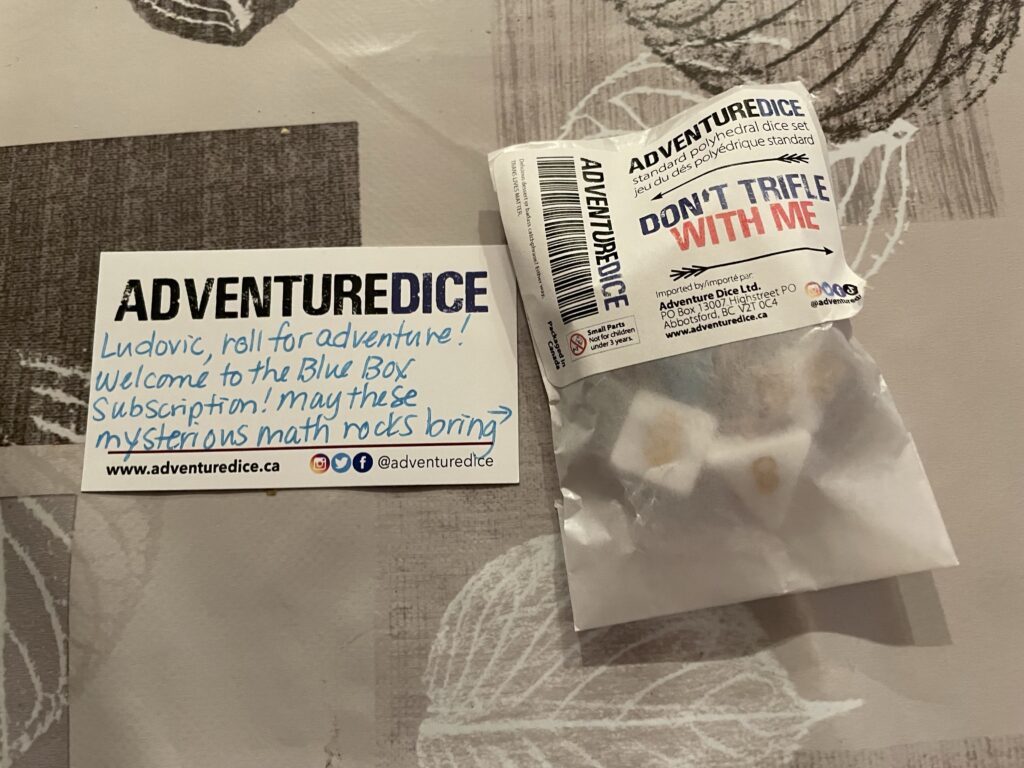

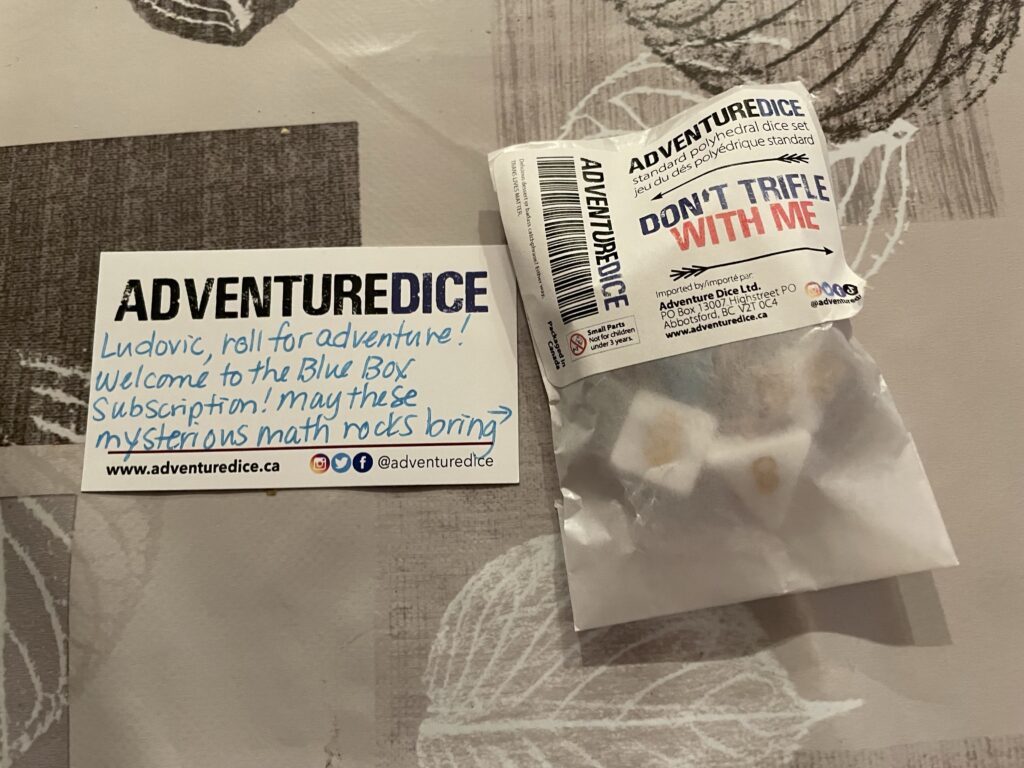

I got an Adventure Dice subscription as a birthday gift! The first dice look like very yummy candy and I want to eat them…

Good morning!

Finally got a patch for my trusted Goruck backpack! Looks nice and discreet. Hopefully a black Canadian flag doesn’t symbolize anything bad 😅

Dog life is hard

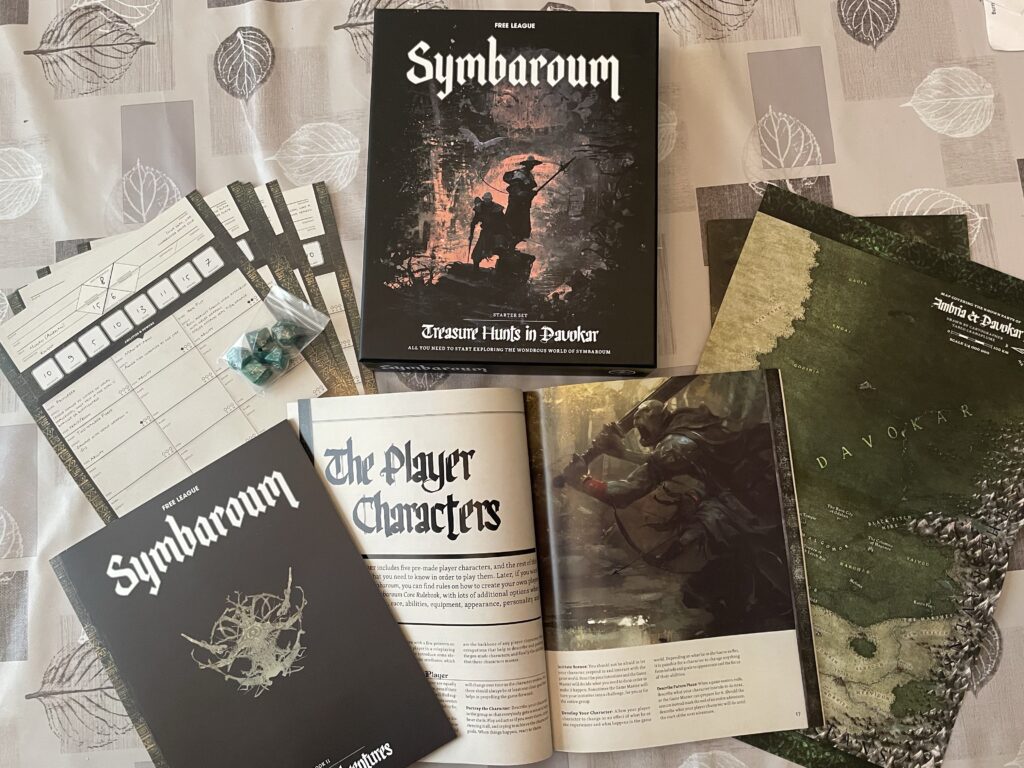

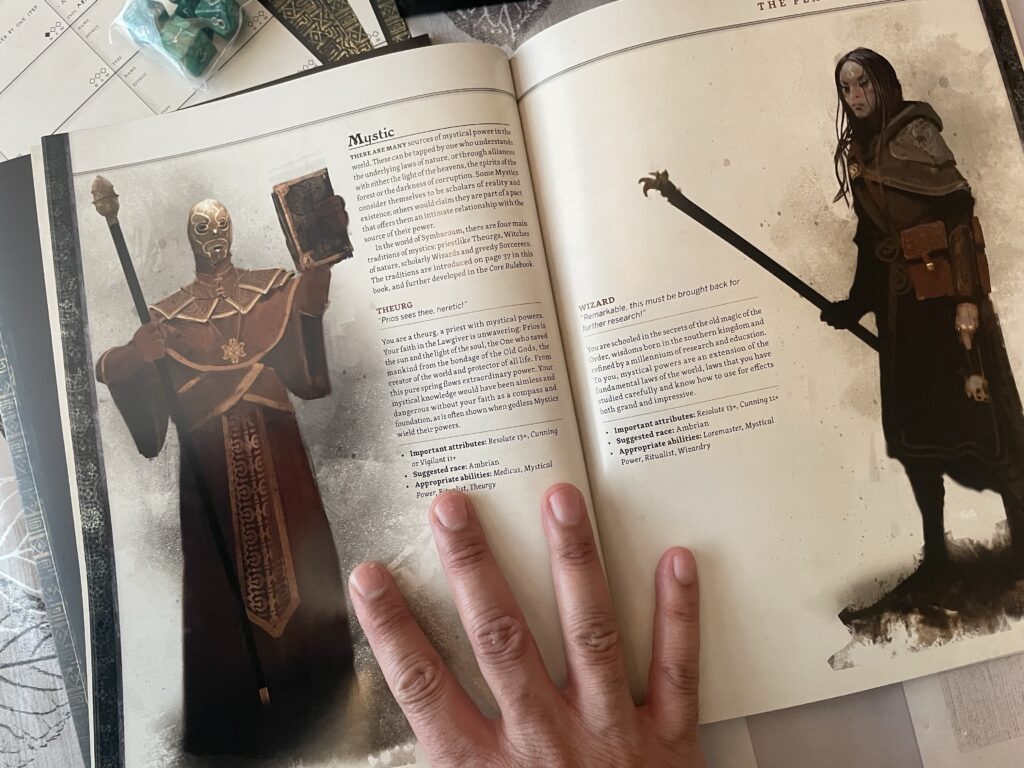

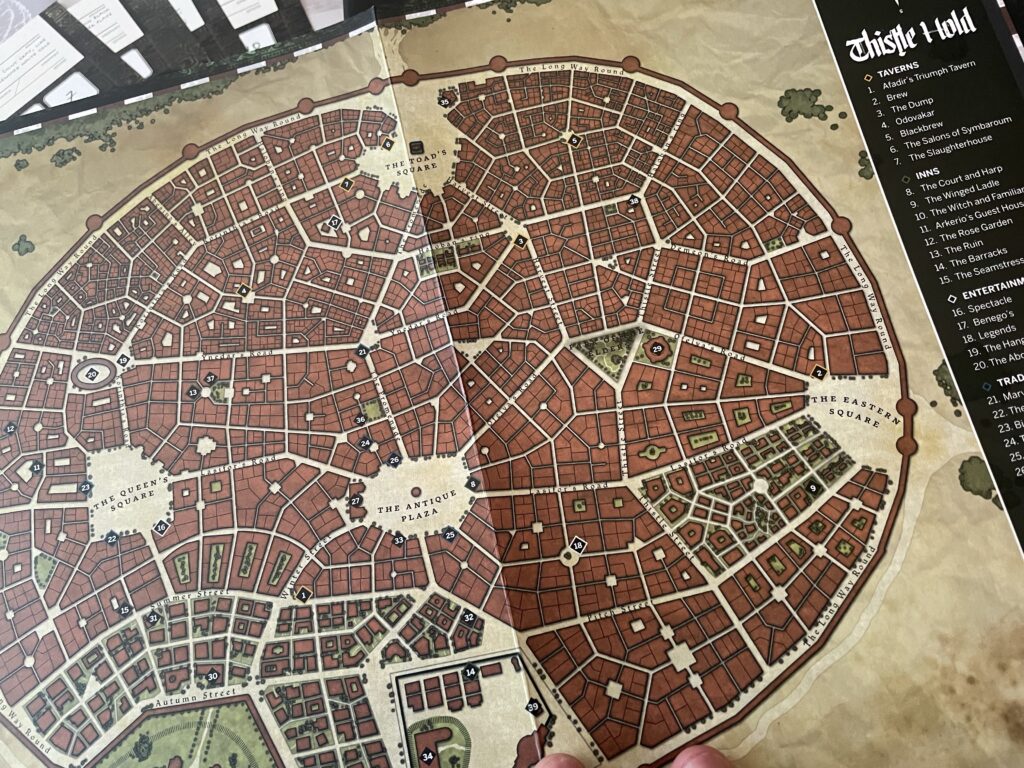

Currently reading the Symbaroum starter set! I don’t know much yet, so I’m still at the stage of reading about the setting and asking “sure, but what do we do in this game?”

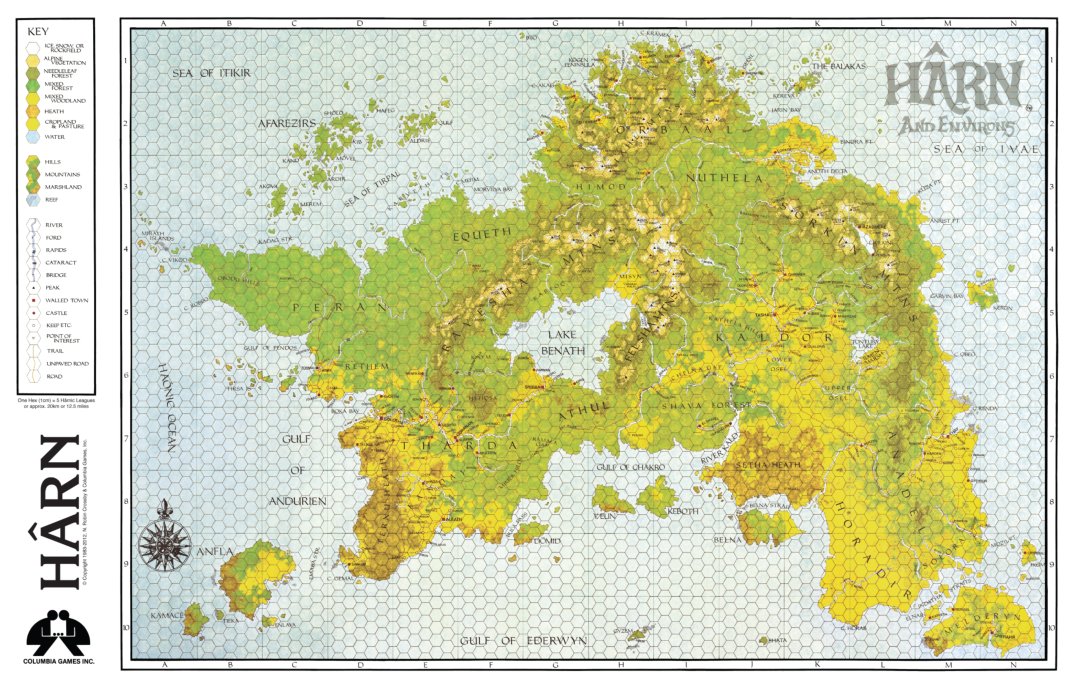

Woohoo, the new Harn Kickstarter includes HarnWorld and Harndex, which means it’s perfect for newcomers! Plus, the famous map! Note that you can get any of the kingdom books as add-ons.

Youngest kid and I went to get a… (checks notes). Get a bucket of Taro milk bubble tea. Yeah.

I can believe Xena’s gravity-defying backflips and chakram-throwing skills, but then there are also episodes like this one, in which she teaches Hippocrates gangrene diagnosis, CPR, tracheotomy, C-sections, and more!

Cautiously optimistic for old-growth forest in British Columbia…

I’ve seen a few Batman fan-films, but this is the first one that I genuinely liked a lot. Great job to everybody involved!